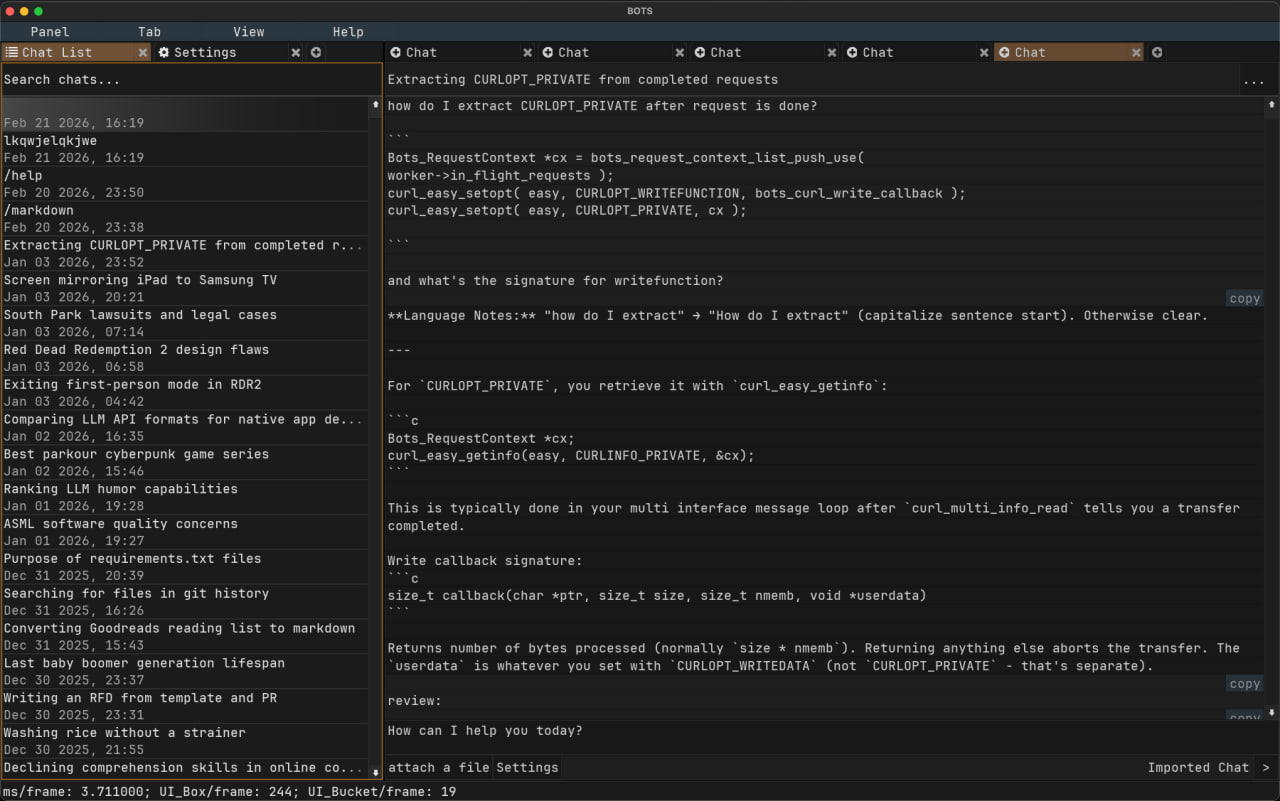

Bots

Native LLM Client

A local-first LLM client that doesn't choke on long conversations. Built on a game engine, not Electron. Scrolls 10k messages at 3ms per frame. Your data stays on your machine in SQLite you control.

$20 once. No subscription.

I build desktop apps and graphics engines the old way: compiled code, no web views, no Electron, no 500MB runtime for a text editor.

Indie developer focused on macOS. Background in graphics programming. Currently shipping an LLM client and working on a graphics engine. Everything built on real rendering backends, not browser tech pretending to be native.

Coming Soon

A real-time rendering engine built from scratch. Vulkan backend. Same UI framework powering Bots. More details when there's something to show.

In development

Notes on building native software, graphics programming, and shipping products.

The origin story: from Casey Muratori's game engine streams to forking raddbg to building a standalone collection of libraries and shipping products.

Long chats. Real search. Yours forever.

macOS now. Linux and Windows coming soon.

Web UIs break on long conversations. Search only finds auto-generated titles. History disappears when you're offline. One provider goes down and you're stuck.

This is a native macOS app that handles all of it. Not Electron — built on a fork of raddbg's UI layer, it renders at 3ms per frame. Your conversations live in a local SQLite database you own and control. Bring your own API keys — any OpenAI-compatible endpoint works.
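For readers unsure what "OpenAI-compatible" means in practice: it usually refers to a server that accepts the chat completions request shape, which is a POST to `<base URL>/chat/completions` with a bearer token and a JSON body holding a model name and a list of messages. The Python sketch below only builds such a request and never sends it. It is an illustration of the wire format, not the app's own code. The function name `build_chat_request`, the local base URL, the key, and the model name are all hypothetical placeholders.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, user_text: str) -> urllib.request.Request:
    """Build (but do not send) a chat completions request for an OpenAI-compatible server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical values: a server on localhost and a placeholder key.
request = build_chat_request("http://localhost:8080/v1", "sk-placeholder", "example-model", "Hello")
print(request.full_url)
```

Switching providers then comes down to changing the base URL, key, and model name, which is why a single client can work against many different backends.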

Local-first. No cloud. No account. No subscription — $20 once, updates included.

Requires macOS MACOS_VERSION+. Bring your own API keys. No screen reader support — game engine UI trade-off.

Setup walkthrough and feature overview.

I'm Dima Afanasyev. By day I work as a developer; on the side I'm building this and saving up for a physics degree (optics) in Germany. The plan: save enough to live on while studying, since I can't do both a bachelor's and full-time work. Every sale here goes into that fund.

I'm into graphics programming — the engine underneath this app started as a fork of raddbg, and I plan to keep working on it long-term. I've got a graphics demo in the works using the same engine. The physics degree is partly about getting the applied math foundation I want.

So if you buy this, you're helping fund one developer's education. And you get a fast, local LLM client out of it.